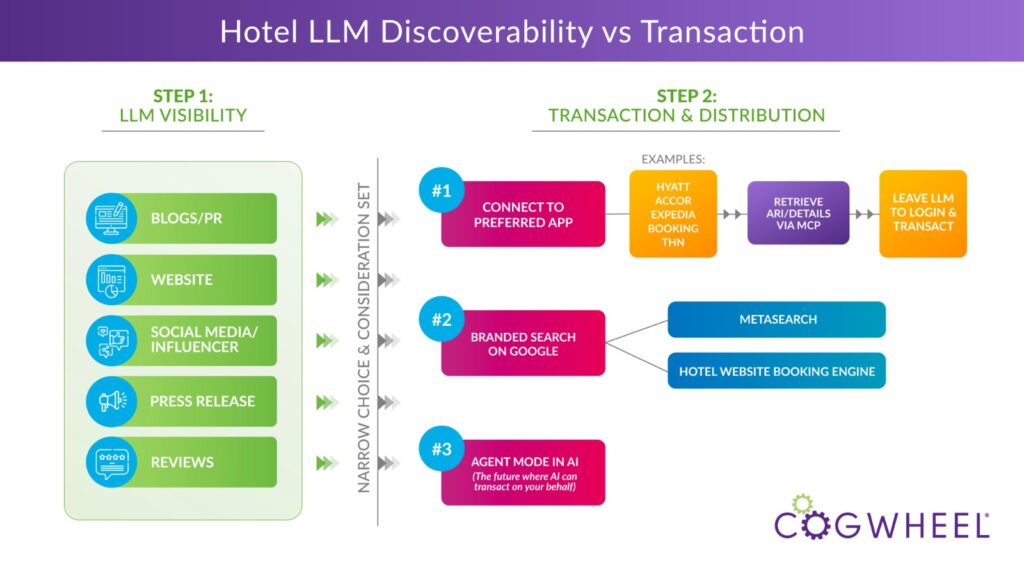

Not all AI investments for the hotel industry are equal. Many solutions focus on transaction infrastructure, but discoverability is often the gating factor for success. AI hotel distribution has two components: being recommended and being bookable. Most hotels should focus first on being recommendable before investing heavily in booking integrations.

NB: This is an article from Cogwheel, one of our Expert Partners


It’s a classic case of hotel Marketing vs. Revenue Management.

Make sure your investment aligns with the desired outcome.

The Discoverability Component (The “Marketing” Side)

Think of this as the modern version of SEO. In this scenario, you want to know:

- Is the AI recommending your hotel?

- What is it saying about your hotel?

- How often does your hotel appear in topical prompts like “best boutique hotels in downtown Denver for a girls weekend”?
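The third question can be turned into a simple number. The sketch below is a minimal illustration, assuming you have already saved the text of several AI answers to prompts like the one above. The hotel names, the sample responses, and the `mention_rate` helper are all hypothetical, and a plain name match will miss misspellings or abbreviated names.

```python
def mention_rate(hotel_name: str, responses: list[str]) -> float:
    """Return the share of saved AI responses that mention the hotel by name."""
    if not responses:
        return 0.0
    needle = hotel_name.casefold()  # case-insensitive comparison
    hits = sum(1 for text in responses if needle in text.casefold())
    return hits / len(responses)


# Hypothetical saved answers; in practice these would be collected by hand
# or exported from whatever tool you use to run the prompts.
sample_responses = [
    "For a girls weekend downtown, consider The Example Inn or Hotel Placeholder.",
    "Top boutique picks: Hotel Placeholder and Sample Suites.",
    "The Example Inn is a popular boutique choice for groups.",
    "Sample Suites offers rooftop cocktails downtown.",
]

rate = mention_rate("The Example Inn", sample_responses)
print(f"Mentioned in {rate:.0%} of responses")  # Mentioned in 50% of responses
```

Tracking this figure over time for a fixed set of prompts gives a rough sense of whether discoverability is improving, though it says nothing about what the AI is actually saying about the hotel.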

Just like getting a hotel to rank on page one of Google, discoverability is about being in the consideration set: was your hotel surfaced as an option at all? The AI builds its “opinion” of your hotel based on:

- Your own website, with schema markup and good content

- Local blogs and media mentions

- Influencers and social media

- Third-party reviews

- Structured and unstructured backlinks & citations from credible sources
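For the first item in that list, "schema" usually means schema.org structured data embedded in the page as JSON-LD. The sketch below is a minimal example that builds such a block with the schema.org `Hotel` type. Every value (name, URL, address, amenity) is a placeholder assumption, and a real property would include more detail.

```python
import json

# Placeholder values throughout; replace with the property's real details.
hotel_schema = {
    "@context": "https://schema.org",
    "@type": "Hotel",
    "name": "The Example Inn",
    "description": "A boutique hotel in downtown Denver.",
    "url": "https://www.example.com",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "123 Example Street",
        "addressLocality": "Denver",
        "addressRegion": "CO",
        "addressCountry": "US",
    },
    "amenityFeature": [
        {
            "@type": "LocationFeatureSpecification",
            "name": "Rooftop bar",
            "value": True,
        },
    ],
}

# Serialize and wrap in the script tag that carries JSON-LD in an HTML page.
json_ld = json.dumps(hotel_schema, indent=2)
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

Keeping the name and address here identical to what appears in third-party listings supports the consistency the rest of this section describes.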

LLMs do not use backlinks as ranking signals in the same deterministic way Google does. Instead, they draw on consistent third-party coverage and entity mentions across credible sources, which can influence their training. These references also effectively become a store of data that can be retrieved through connected search layers, which ultimately shapes whether and how your property is recommended.

This isn’t just about your website or your schema markup. The AI learns from many places to determine whether and when to offer up your hotel as a suggestion. If those mentions are not consistent and robust, the risk is that the AI at best learns nothing about you because the data is off, or at worst creates “hallucinations” about you because it is learning from inconsistent data.