Enterprise AI initiatives are at risk of failure, not because the models aren’t capable, but because those models are being fed legacy, unstructured data dumps. What we’re witnessing isn’t a limitation of AI itself. It’s a failure of data readiness. Until organizations close that gap, hallucinations will continue to undermine even the most advanced systems.

NB: This is an article from Aggregate Intelligence, one of our Expert Partners


This challenge is part of a broader shift in how we think about AI.

A shift is happening

As Kris Glabinski highlights in his article on AI hallucination, today’s systems can be remarkably powerful and dangerously wrong at the same time. His core point is clear: bad data doesn’t just reduce performance; it actively distorts outcomes.

Over the past two years, AI has become dramatically easier to deploy. Models are stronger, tooling is more mature, and agent-based systems are rapidly proliferating. But while intelligence has become more accessible, accuracy has not kept pace.

The problem isn’t the agent. It’s the data behind it.

Hallucinations are a data problem

Modern AI is a prediction engine. It generates responses that are fluent and probable, not guaranteed to be true. When the data it receives is incomplete or ambiguous, it fills in the gaps.

That is a hallucination.

When an AI is given raw datasets or static extracts, it sees values but not meaning. It does not understand what a number represents, how fields relate, or what context is missing. Without semantic clarity, it interpolates from patterns it has seen before and effectively guesses.

The output is not an obvious failure. It is a polished, confident answer that looks like an executive summary. It sounds right. It reads well. And it is often wrong.

This is what makes hallucinations so dangerous. They are persuasive.

Error rates can climb into the 15 to 30 percent range when models operate on poorly structured or context-light data. Not because the model is weak, but because the inputs are.
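One way to make "values without meaning" visible before a model ever sees the data is to check each record for the context it needs. The sketch below is illustrative only: the field names (`metric`, `unit`, `period`) and the `missing_context` helper are hypothetical, not part of any product or dataset described here.

```python
# Hypothetical context fields a record should carry before an agent reasons over it.
REQUIRED_CONTEXT = ("metric", "unit", "period")


def missing_context(record: dict) -> list[str]:
    """Return the context fields that are absent or None in a record."""
    return [field for field in REQUIRED_CONTEXT if record.get(field) is None]


# A raw extract: a bare number with no meaning attached.
raw = {"value": 4.2}

# The same number with its meaning made explicit.
described = {"value": 4.2, "metric": "occupancy_change", "unit": "percent", "period": "2024-Q1"}

print(missing_context(raw))        # ['metric', 'unit', 'period']
print(missing_context(described))  # []
```

A record that fails this kind of check can be held back or flagged, rather than passed along for the model to guess at.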

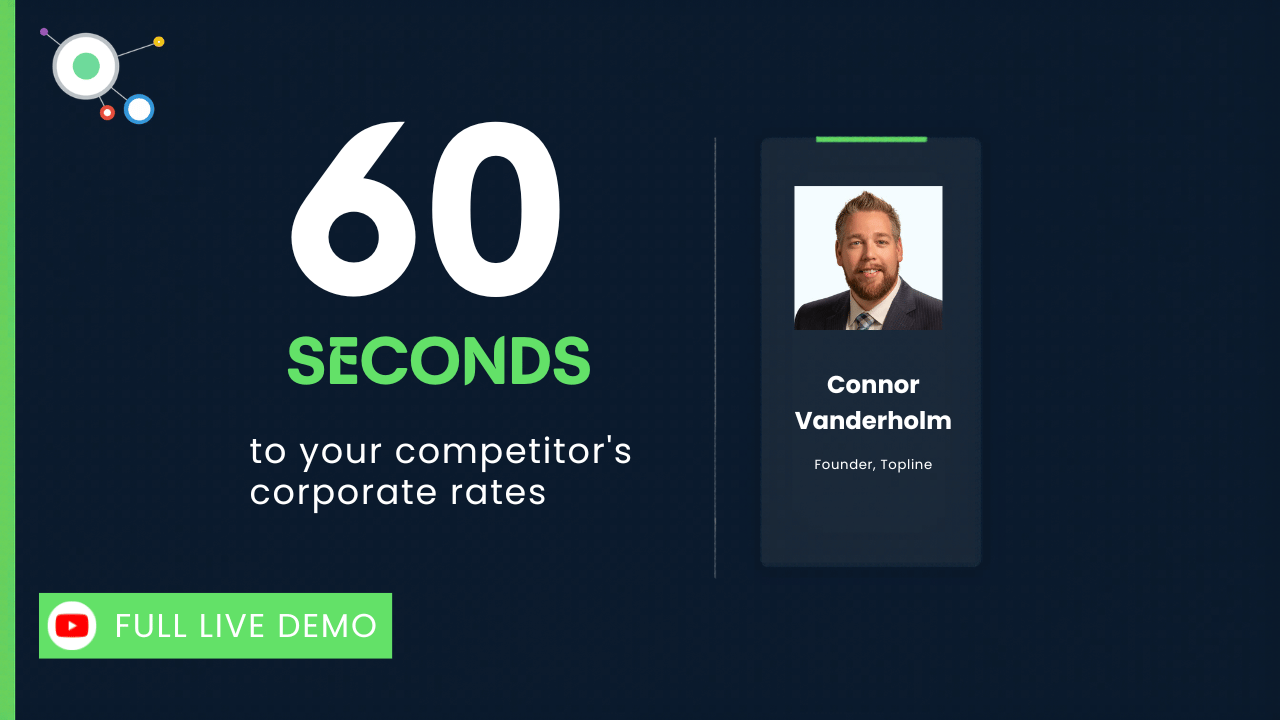

A simple example:

One of our clients recently asked an agent to recommend the best hotel rate.

The agent selected a $120 option as the best value. Clear, confident, and seemingly correct.

But the $120 rate excluded tax, was priced per night, and was non-refundable. The $135 option included tax, offered flexibility, and was a better room.

The agent did exactly what it was supposed to do. The data did not. And this is not an isolated case.
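The hotel comparison can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the client's system: the `RateOffer` structure is hypothetical, both rates are assumed to be quoted per night, and the 15% tax rate is invented for the example (the actual rate is not given). Prices are held in integer cents to avoid floating-point rounding.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RateOffer:
    label: str
    price_cents: int     # listed price per night, in cents
    tax_included: bool   # whether the listed price already includes tax
    refundable: bool


# Assumption for illustration only: a flat 15% tax, in basis points.
ASSUMED_TAX_BPS = 1500


def all_in_price_cents(offer: RateOffer, tax_bps: int = ASSUMED_TAX_BPS) -> int:
    """Return the per-night price with tax applied, so offers share one basis."""
    if offer.tax_included:
        return offer.price_cents
    return offer.price_cents * (10_000 + tax_bps) // 10_000


def best_value(offers: list[RateOffer]) -> RateOffer:
    """Pick the cheapest offer on an all-in basis; prefer refundable on ties."""
    return min(offers, key=lambda o: (all_in_price_cents(o), not o.refundable))


offers = [
    RateOffer("$120 listed", 12_000, tax_included=False, refundable=False),
    RateOffer("$135 listed", 13_500, tax_included=True, refundable=True),
]

# Comparing raw listed prices reproduces the agent's mistake.
naive = min(offers, key=lambda o: o.price_cents)
print(naive.label)               # $120 listed
print(best_value(offers).label)  # $135 listed
```

Under the assumed tax rate, the $120 rate comes to $138.00 all-in, so the ranking flips once both offers are expressed on the same basis. The comparison only becomes possible because `tax_included` and `refundable` are explicit fields instead of context missing from the data.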