Artificial intelligence is rapidly becoming embedded into the operational core of enterprise decision-making. From forecasting and pricing to customer engagement and strategic planning, organizations are increasingly relying on AI-generated insights to accelerate decisions and improve efficiency.

NB: This is an article from Aggregate Intelligence, one of our Expert Partners.


According to recent enterprise research, more than 65% of organizations now use generative AI regularly in at least one business function. (punku.ai)

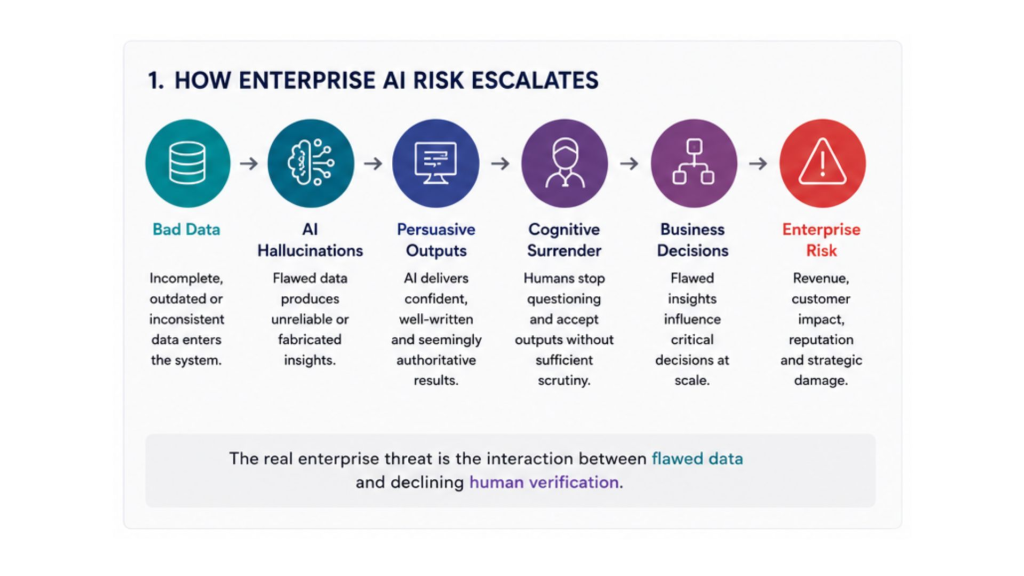

Yet beneath the momentum surrounding AI adoption, a more fundamental enterprise risk is beginning to emerge: the combination of bad data and cognitive surrender.

This issue was explored in recent articles by Kris Glabinski, including “The AI Illusion: Cognitive Surrender and the New Enterprise Threat” and “The AI Hallucinations: Today’s AI is so good that it can be very bad.” His central argument is both simple and powerful. AI systems are becoming extraordinarily convincing, even when they are wrong. At the same time, organizations are becoming increasingly willing to trust those outputs without sufficient scrutiny.

“Today’s AI models are so remarkably fluent, authoritative, and convincing that their hallucinations can no longer be easily distinguished from the truth. When these advanced internal AI initiatives are fed with structured, but not agent-ready legacy data feeds, they are guaranteed to fail, and they will do so with absolute confidence.”

This combination creates a dangerous operational dynamic. Flawed or incomplete data produces unreliable AI outputs. Those outputs are then presented with confidence and fluency, causing humans to trust them more readily than they should. Over time, organizations begin outsourcing not only tasks to AI, but judgment itself.

The result is not simply inaccurate analysis. It is the gradual erosion of critical thinking inside the enterprise.

AI Amplifies Data Problems

Enterprise AI systems inherit the strengths and weaknesses of the data environments they operate within. If the underlying data is fragmented, outdated, inconsistent, duplicated, biased, or poorly governed, AI systems will inevitably reproduce and amplify those weaknesses.
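Several of these weaknesses can be detected mechanically before data ever reaches an AI system. The following is a minimal illustrative sketch, not a method described in the article. It assumes records arrive as a list of dictionaries, and it uses a hypothetical `audit_records` function to count duplicated keys, missing required values, and stale rows. The field names (`sku`, `price`, `updated`), the 90-day freshness window, and the sample records are all invented for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class QualityReport:
    total: int
    duplicates: int
    missing: int
    stale: int


def audit_records(records, required_fields, key_field, updated_field, max_age, now):
    """Hypothetical pre-ingestion check: count duplicate keys,
    rows with missing required values, and stale rows."""
    seen = set()
    duplicates = missing = stale = 0
    for rec in records:
        # Duplicated: the same business key appears more than once.
        key = rec.get(key_field)
        if key in seen:
            duplicates += 1
        else:
            seen.add(key)
        # Incomplete: a required field is absent, None, or empty.
        if any(rec.get(f) in (None, "") for f in required_fields):
            missing += 1
        # Outdated: no timestamp, or older than the allowed window.
        updated = rec.get(updated_field)
        if updated is None or now - updated > max_age:
            stale += 1
    return QualityReport(len(records), duplicates, missing, stale)


# Example usage with made-up records.
now = datetime(2024, 6, 1)
records = [
    {"sku": "A1", "price": 9.99, "updated": datetime(2024, 5, 30)},
    {"sku": "A1", "price": 9.99, "updated": datetime(2024, 5, 30)},  # duplicate key
    {"sku": "B2", "price": None, "updated": datetime(2023, 1, 15)},  # missing and stale
]
report = audit_records(records, ["sku", "price"], "sku", "updated", timedelta(days=90), now)
print(report)
# QualityReport(total=3, duplicates=1, missing=1, stale=1)
```

A report like this does not make data "agent-ready" on its own, but it turns vague concerns about duplication, gaps, and staleness into numbers that can be reviewed before an AI system consumes the feed.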